For this next installment in my series about FTP clients, I want to take a look at Globalscape's CuteFTP, which is available from the following URL:

http://www.cuteftp.net/

CuteFTP is a retail product that used to be available in several editions - Lite, Home, and Pro - but at the time of this writing CuteFTP was available only in a single edition that combined all of the features. With that in mind, for this blog post I used CuteFTP 9.0.5.

CuteFTP 9.0 Overview

I should start off with a quick side note: it's kind of embarrassing that it has taken me so long to review CuteFTP, because CuteFTP has been my primary FTP client at one time or other over the past 15 years or so. That being said, it has been a few years since I had last used CuteFTP, so I was curious to see what had changed.

Fig. 1 - The Help/About dialog in CuteFTP 9.0.

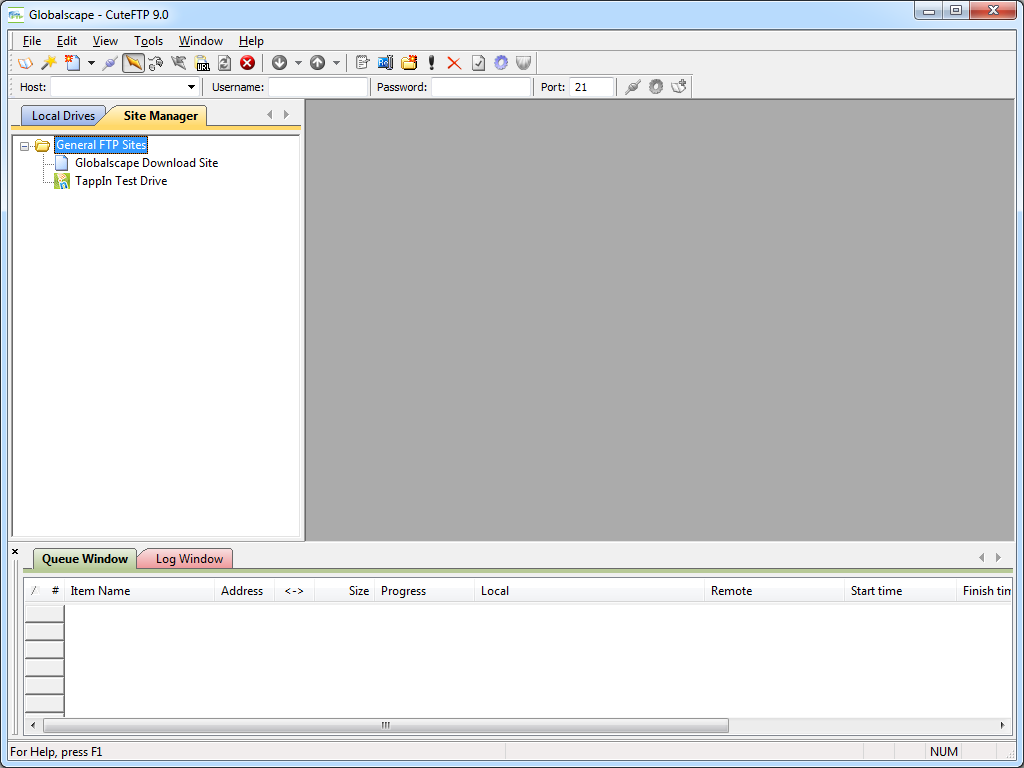

To start things off, when you first install CuteFTP 9.0, it opens a traditional explorer-style view with the Site Manager displayed.

Fig. 2 - CuteFTP 9.0's Site Manager.

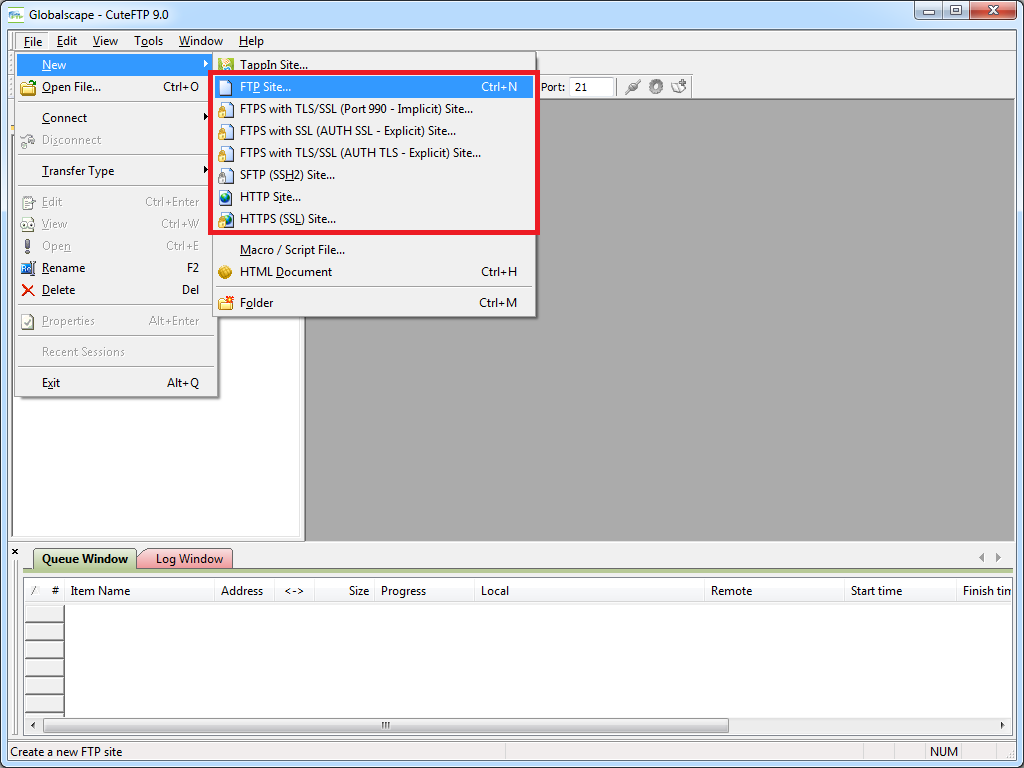

When you click File -> New, you are presented with a variety of connection options: FTP, FTPS, SFTP, HTTP, etc.

Fig. 3 - Creating a new connection.

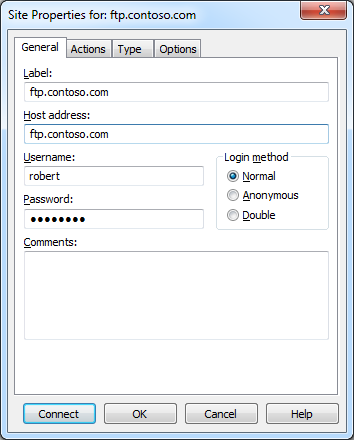

When the Site Properties dialog is displayed during the creation of a new site connection, you have many of the options that you would expect, including the ability to change the FTP connection type after-the-fact; e.g. FTP, FTPS, SFTP, etc.

Fig. 4 - Site connection properties.

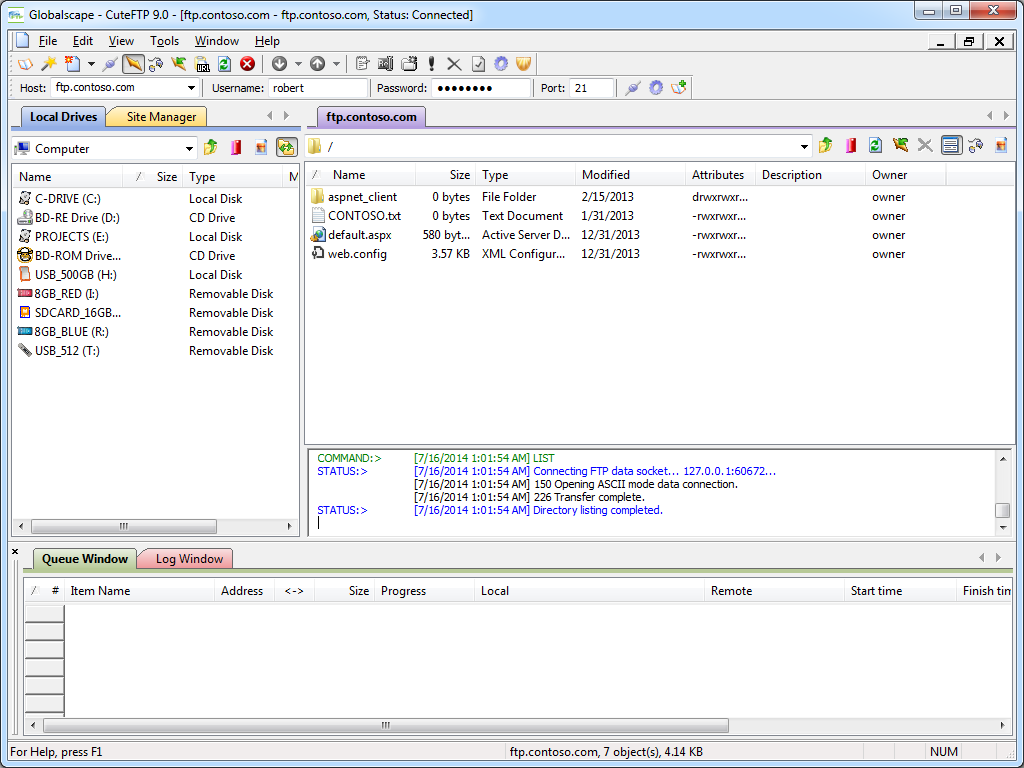

Once an FTP connection has been established, the CuteFTP connection display is pretty much what you would expect in any graphical FTP client.

Fig. 5 - FTP connection established.

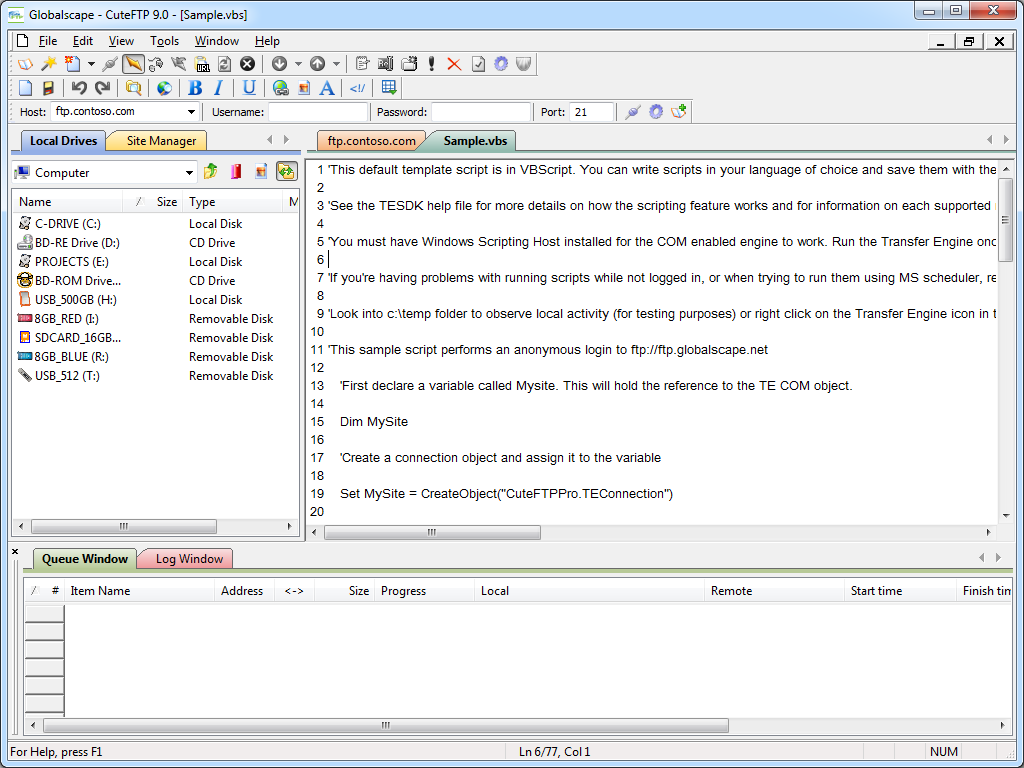

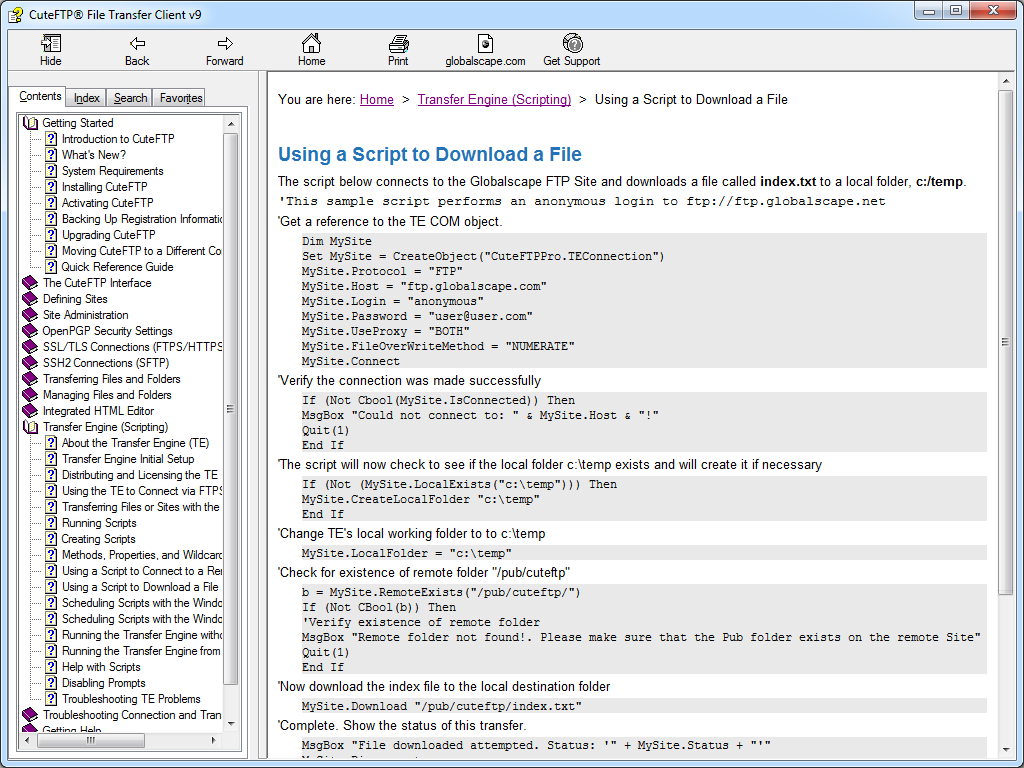

A cool feature for me is that CuteFTP 9.0 supports a COM interface (called the Transfer Engine), so you can automate CuteFTP commands through .NET or a scripting language. What I found especially cool about CuteFTP's scripting interface is the inclusion of several practical samples in the help file that is installed with the application.

Fig. 6 - Scripting CuteFTP.

Fig. 7 - Scripting samples in the CuteFTP help file.

Anyone who has read my blogs in the past knows that I am also a big fan of WebDAV, and an interesting feature of CuteFTP is built-in WebDAV integration. Of course, this functionality is a little redundant if you are using Windows XP or any later version of Windows, since WebDAV integration is built into the operating system via the WebDAV redirector (which lets you map drive letters to WebDAV-enabled websites). But still - it's cool that CuteFTP is trying to be an all-encompassing transfer client.

Fig. 8 - Creating a WebDAV connection.

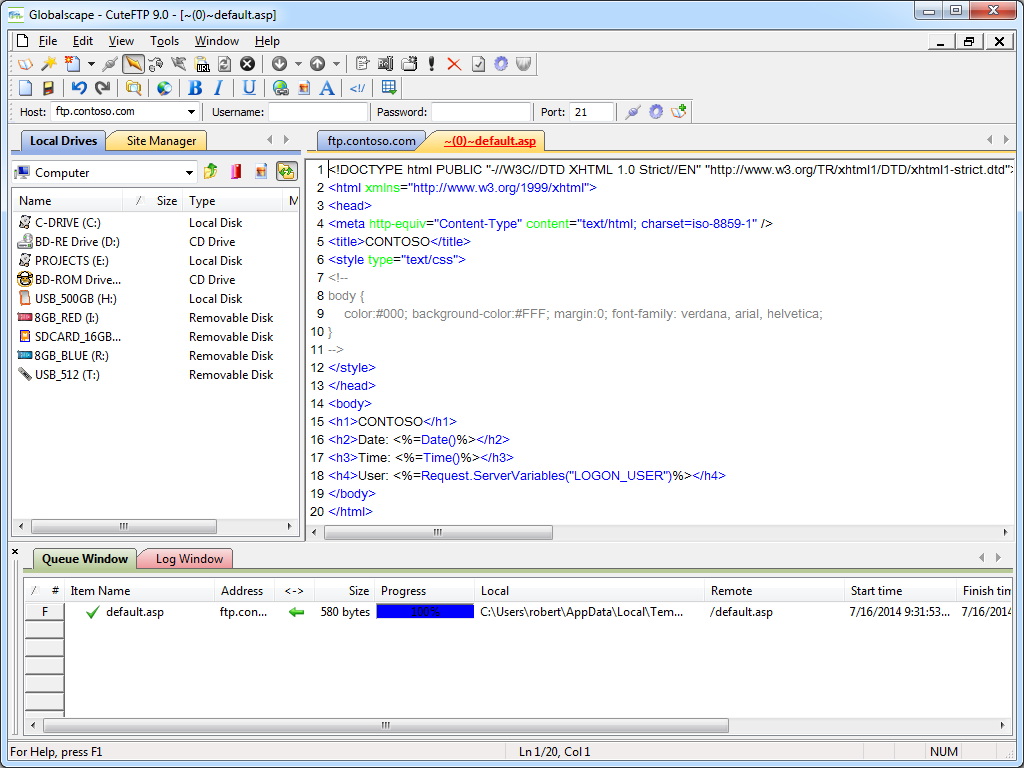

One last cool feature that I should call out in the overview is the integrated HTML editor, which is pretty handy. I could see where this might be useful on a system where you use FTP and you don't want to bother installing a separate editor.

Fig. 9 - CuteFTP's Integrated HTML editor.

Using CuteFTP 9.0 with FTP over SSL (FTPS)

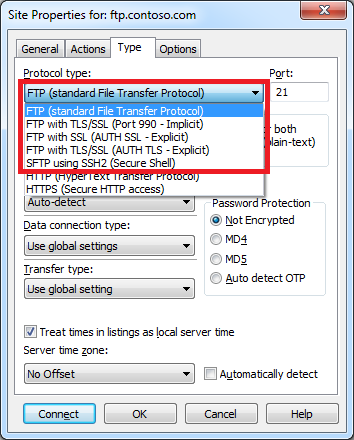

CuteFTP 9.0 has built-in support for FTP over SSL (FTPS), and it supports both Explicit and Implicit FTPS. To specify which type of encryption to use for FTPS, you need to choose the appropriate option from the Protocol type drop-down menu in the Site Properties dialog box for an FTP site.

Fig. 10 - Specifying the FTPS encryption.

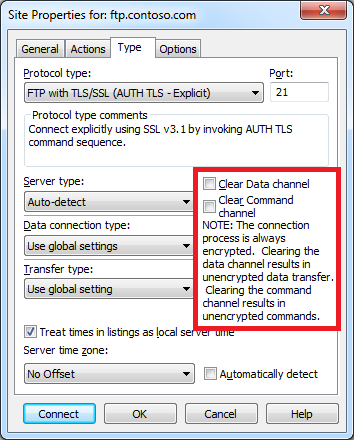

I was really happy to discover that I could use CuteFTP 9.0 to configure an FTP connection to drop out of FTPS on either the data channel or command channel once a connection is established. This is a very flexible design, because it allows you to configure FTPS for just your user credentials with no data and no post-login commands, or all commands and no data, or all data and all commands, etc.

Fig. 11 - Specifying additional FTPS options.
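To make those combinations a little more concrete, here is a minimal Python sketch of the FTP commands that an Explicit FTPS client would typically send for each choice. This is only an illustration based on the standard FTPS commands (AUTH TLS, PBSZ, PROT, and CCC); the function name and parameters are hypothetical, the command order is one common arrangement, and I am not claiming that this is exactly what CuteFTP sends on the wire.

```python
def ftps_command_plan(user, password,
                      protect_commands_after_login=True,
                      protect_data=True):
    """Return the ordered control-channel commands for an Explicit FTPS login.

    Hypothetical helper for illustration only; it builds a list of strings
    and does not open a network connection.
    """
    plan = [
        "AUTH TLS",            # upgrade the command channel to TLS
        f"USER {user}",        # credentials are sent over the encrypted channel
        f"PASS {password}",
        "PBSZ 0",              # protection buffer size, required before PROT
    ]
    # PROT P = private (encrypted) data channel, PROT C = clear data channel
    plan.append("PROT P" if protect_data else "PROT C")
    if not protect_commands_after_login:
        # CCC = Clear Command Channel: drop back to plain text after login
        plan.append("CCC")
    return plan


if __name__ == "__main__":
    # Encrypt only the login: clear data channel, clear post-login commands.
    for command in ftps_command_plan("alice", "secret",
                                     protect_commands_after_login=False,
                                     protect_data=False):
        print(command)
```

The two boolean parameters correspond loosely to the command channel and data channel choices described above.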

Using CuteFTP 9.0 with True FTP Hosts

CuteFTP 9.0 does not have built-in support for the HOST command that is specified in RFC 7151, nor does CuteFTP have a first-class way to specify pre-login commands for a connection.

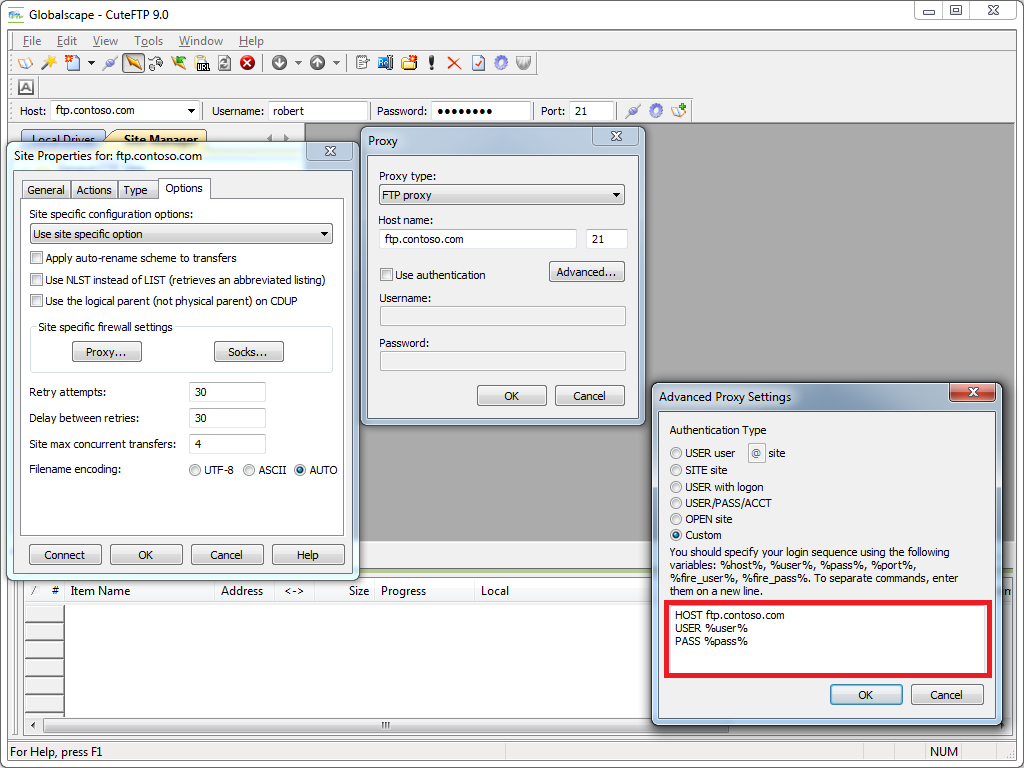

That being said, I was able to find a way to configure CuteFTP 9.0 to send a HOST command for a connection by specifying custom advanced proxy commands. Here are the steps to pull this off:

- Bring up the properties dialog for an FTP site in the CuteFTP Site Manager

- Click the Options tab

- Choose "Use site specific option" in the drop-down

- Enter your FTP domain name in the Host name field

- Click the Advanced button

- Specify Custom for the Authentication Type

- Enter the following information:

HOST ftp.example.com

USER %user%

PASS %pass%

Where ftp.example.com is your FTP domain name

- Click OK for all of the open dialog boxes

Fig. 12 - Specifying a true FTP hostname via custom proxy settings.

Note: I could not get this workaround to successfully connect with FTPS sessions; I could only get it to work with regular (non-encrypted) FTP sessions.
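As a rough illustration of what the custom settings above accomplish, the following Python sketch expands a template like the one in the steps into the commands that would be sent before the session starts. The function name is hypothetical, and the handling of the %user% and %pass% placeholders is my assumption about how they get substituted; treat it as a sketch rather than a description of CuteFTP's internals.

```python
def render_login_sequence(template_lines, user, password):
    """Expand %user% and %pass% placeholders in a list of FTP commands.

    Hypothetical helper for illustration; it only performs text substitution.
    """
    rendered = []
    for line in template_lines:
        line = line.replace("%user%", user).replace("%pass%", password)
        rendered.append(line)
    return rendered


if __name__ == "__main__":
    # Same three lines as in the steps above; ftp.example.com is a placeholder.
    template = [
        "HOST ftp.example.com",
        "USER %user%",
        "PASS %pass%",
    ]
    for command in render_login_sequence(template, "alice", "secret"):
        print(command)
```

The important detail is the ordering: the HOST command has to be sent before USER and PASS so that the server can pick the right FTP site before it authenticates the user.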

Using CuteFTP 9.0 with Virtual FTP Hosts

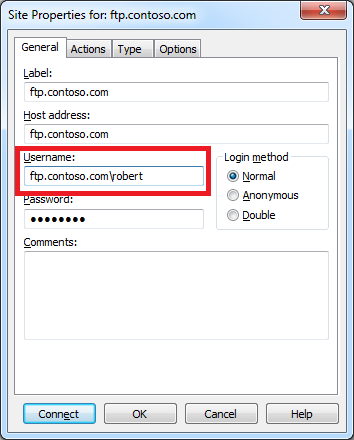

CuteFTP 9.0's login settings allow you to specify the virtual host name as part of the user credentials by using syntax like "ftp.example.com|username" or "ftp.example.com\username". So if you don't want to use the workaround that I listed earlier, or you need to use FTPS, you can use virtual FTP hosts with CuteFTP 9.0.

Fig. 13 - Specifying an FTP virtual host.
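If you generate site entries from a script, the virtual host syntax is easy to build and take apart. Here is a small Python sketch; the function names are hypothetical, and it only covers the two separators mentioned above ("|" and "\").

```python
VALID_SEPARATORS = ("|", "\\")


def virtual_host_login(host, username, separator="|"):
    """Combine a virtual host name and a user name into one login string."""
    if separator not in VALID_SEPARATORS:
        raise ValueError("separator must be '|' or a backslash")
    return f"{host}{separator}{username}"


def split_virtual_host_login(login):
    """Split a login string into (host, username).

    Returns (None, login) when no virtual host prefix is present.
    """
    for separator in VALID_SEPARATORS:
        if separator in login:
            host, _, username = login.partition(separator)
            return host, username
    return None, login


if __name__ == "__main__":
    login = virtual_host_login("ftp.example.com", "username")
    print(login)
    print(split_virtual_host_login(login))
```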

Scorecard for CuteFTP 9.0

This concludes my quick look at a few of the FTP features that are available with CuteFTP 9.0, and here are the scorecard results:

| Client Name | Directory Browsing | Explicit FTPS | Implicit FTPS | Virtual Hosts | True HOSTs | Site Manager | Extensibility |
|---|---|---|---|---|---|---|---|
| CuteFTP 9.0.5 | Rich | Y | Y | Y | Y/N (note 1) | Y | N/A (note 2) |

Notes:

1. As I mentioned earlier, support for true HOSTs is not built-in, but I provided a workaround that seems to work great for FTP sessions, although I could not get it to work with FTPS sessions.

2. I could not find a way to extend the functionality of CuteFTP 9.0; but as I said earlier, it provides a COM interface for scripting/automating FTP functionality.


That wraps things up for today's review of CuteFTP 9.0. Your key take-aways should be: CuteFTP is a good FTP client; it has added some great features over the years, and as with most of the FTP clients that I have reviewed, I am sure that I have barely scratched the surface of its potential.

Note: This blog was originally posted at http://blogs.msdn.com/robert_mcmurray/